I got interested in this topic this morning after seeing a pulmccm.org post on four studies of the matter. "They" are trying to convince me that I should be applying NIPPV to obese patients to prevent... well, to prevent what?

We begin with the French study by De Jong et al, Lancet Resp Med, 2023. The primary outcome was a composite of: 1. reintubation; 2. switch to the other therapy (HFNC); 3. "premature discontinuation of study treatment", basically meaning you dropped out of the trial, refusing to continue to participate.

Which of those three outcomes means anything? Only reintubation. I actually went into the weeds of the trial because I wanted to know what the criteria for reintubation were. If you have some harebrained protocol for reintubation that is triggered by blood gas values or even physiological variables (respiratory rate), it could be that NIPPV just protects you from hitting one of the triggers for reintubation. You didn't "need" to be reintubated; your PaCO2, which changed almost imperceptibly (3 mmHg), triggered a reintubation. These triggers for intubation are witless: they don't consider the pre-test probability of recrudescent respiratory failure, and this kind of mindless approach to intubation gets countless hapless patients unnecessarily intubated every day. Alas, the authors don't even report how the decision to reintubate was made in these patients.

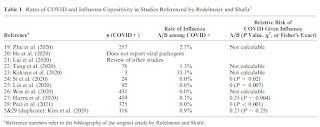

But it doesn't matter. Prophylactic NIPPV doesn't prevent reintubation:

As you can see in this table, the entire difference in the composite outcome is driven by more people being "switched to the other study treatment". Why were they "switched"? We're left to guess. And because this was a non-blinded study, there is a severe risk that treating physicians knew the study hypothesis, believed in NIPPV, and were on a hair trigger to do "the switch".

But it doesn't matter. This table shows you that you can start off on HFNC and, if you look at the doctors cross-eyed and tachypneic, they can switch you from HFNC to NIPPV and voilà! You needn't worry about being reintubated at a higher rate than had you received NIPPV from the first.

Look also at the reintubation rates: ~10%. They're not extubating people fast enough! Threshold is too high.

So we have yet another study where doing something to everyone now saves them from the situation where a fraction of them would have had it done to them later. Like the 2002 Konstantinides study of early TPA for what we now call intermediate risk PE. In that study, you can give 100 people TPA up front, or just give it to the 3% that crumps - no difference in mortality.

I won't do it. Because people are relieved to be extubated and it's a buzz kill to immediately strap a tight-fitting mask to them.

Regarding the Thille studies (this one and this one): I'm already familiar with the original study of NIPPV plus HFNC vs HFNC alone directly after extubation in patients at "high risk" of reintubation. The results were statistically significant in favor of NIPPV, with 12% reintubated vs 18% with HFNC alone. And they did a pretty good job of defining the reasons for reintubation, as well as anybody could. But these patients were all over 65 and had heart and/or lung diseases of the variety that are already primary indications for NIPPV. And the post-hoc subgroup analysis (second study linked above, a reanalysis of the first) focusing on obese patients shows the same effect as the entire cohort in the original study, with perhaps a bigger effect in overweight and obese patients. But recall, these patients have known indications for NIPPV, and we've known for 20 years that if you extubate everybody "high risk" to NIPPV, you have fewer reintubations. (The De Jong study, to its credit, excluded people with other indications for NIPPV, e.g., OHS.) The question is whether you want an ICU full of people on NIPPV for 48 hours (or more) post-extubation with routine adoption of this method, or whether you wanna use it selectively.
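To put a rough number on that "routine vs selective" question, here is a back-of-the-envelope sketch of the number needed to treat implied by the 18% and 12% reintubation rates quoted above. It assumes those rounded percentages rather than the trial's exact event counts, and the function name `number_needed_to_treat` is mine, purely for illustration.

```python
import math


def number_needed_to_treat(control_rate: float, treated_rate: float) -> int:
    """Patients who must receive the intervention to prevent one event."""
    # Absolute risk reduction: how much the event rate drops with treatment.
    arr = control_rate - treated_rate
    if arr <= 0:
        raise ValueError("no benefit: treated rate is not lower than control rate")
    # Round up, since you cannot treat a fraction of a patient.
    return math.ceil(1 / arr)


# Assumption: rounded rates from the text, not the trial's exact counts.
hfnc_alone = 0.18
nippv_plus_hfnc = 0.12

nnt = number_needed_to_treat(hfnc_alone, nippv_plus_hfnc)
print(f"ARR = {hfnc_alone - nippv_plus_hfnc:.0%}, NNT = {nnt}")
```

On those rounded figures, that is roughly 17 "high risk" patients strapped to a mask after extubation for each reintubation avoided, which is the trade-off to weigh when deciding between routine and selective use.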

Now would be a good time to mention the caveat I've obeyed for over 20 years. That's the Esteban 2004 NEJM study showing that if you're trying to use NIPPV as "rescue therapy" for somebody already in post-extubation respiratory failure, they are more likely to die, probably because of delay of necessary reintubation and higher complication rates stemming therefrom.

Now, whenever I can get access to the Hernandez 2025 AJRCCM study, which is paywalled by ATS for this university professor (who is no longer an ATS member, having rejected the political ideology that suffused the society), I will do a new post or add to this one.